Sitecore

Azure

Hina Garg

Architect

Sitecore Commerce Using Azure Blob Storage

Store and access scalable data.

In this blog post, I would like to share some findings on using Azure Blob storage. Azure Blob storage is Microsoft's object storage solution for the cloud, optimized for storing massive amounts of unstructured data. If we need to store a piece of data larger than 64 KB, Azure queues are not an ideal choice, because a single queue message is limited to 64 KB. In such scenarios we should consider using Azure Blob storage instead. To work with it, we need to be familiar with a few basic terms: Storage, Containers and Blobs.

Storage: A storage account provides a unique namespace in Azure for your data. Every object that you store in Azure Storage has an address that includes your unique account name.

Containers: A container organizes a set of blobs, similar to a directory in a file system. A storage account can include an unlimited number of containers, and a container can store an unlimited number of blobs.

Blobs: Azure Storage supports three types of blobs (block, append and page blobs), but today we are going to focus on block blobs. Block blobs store text and binary data and are made up of blocks of data that can be managed individually. A single block blob can store up to about 190.7 TiB.
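To make these terms concrete, here is a minimal Python sketch. It shows how the account, container and blob names combine into a blob's address, and where the 190.7 TiB figure comes from. The account, container and blob names are made up for illustration, and the block limits (50,000 blocks of up to 4,000 MiB each) are the ones documented for recent service versions, so treat them as assumptions if you target an older API version.

```python
from urllib.parse import quote

def blob_url(account: str, container: str, blob_name: str) -> str:
    """Build the public endpoint address of a blob from its three parts."""
    # The account name is part of the host; container and blob form the path.
    return f"https://{account}.blob.core.windows.net/{container}/{quote(blob_name)}"

# Assumed limits for recent service versions.
MAX_BLOCKS = 50_000          # maximum number of blocks in one block blob
MAX_BLOCK_SIZE_MIB = 4_000   # maximum size of a single block, in MiB

def max_block_blob_tib() -> float:
    """Maximum block blob size in TiB (1 TiB = 1024 * 1024 MiB)."""
    return MAX_BLOCKS * MAX_BLOCK_SIZE_MIB / (1024 * 1024)

if __name__ == "__main__":
    # Hypothetical names, used only for illustration.
    print(blob_url("mystore", "abandoned-carts", "cart-42.json"))
    print(f"{max_block_blob_tib():.1f} TiB")
```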

Now let’s take an example to understand how blobs are implemented and used.

Example Scenario: Let’s say that we are required to create a scheduled job that exports the abandoned cart data of users visiting a shopping website into an Excel or CSV file. Later, this data will be used for reporting and analytics.

We will use the following technologies to meet the requirements of this scenario:

- Sitecore

- Sitecore Commerce

- Azure Blob Storage

Approach

- When a user adds an item to the cart, an event is triggered that invokes an action pipeline in Sitecore’s Storefront solution.

- This action calls the corresponding method in the Sitecore Commerce solution through the Commerce Connect API and passes the CartID as a parameter.

- In the Commerce solution, the CartID is used to fetch the cart lines, along with the product information that needs to be stored in the blob and later exported to a CSV file.

- Next, a minion runs at regular intervals to consume the blobs and export the data.
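The last two steps of this approach can be sketched in a few lines of Python. This is only an illustration of the data flow, not the Sitecore Commerce implementation: a plain dictionary stands in for the blob container, and the function names, the blob naming scheme and the cart line fields (`productId`, `quantity`) are all assumptions.

```python
import csv
import io
import json

def store_cart_blob(container: dict, cart_id: str, lines: list) -> str:
    """Step 3: serialize the cart lines and store them as one blob per cart."""
    blob_name = f"cart-{cart_id}.json"  # hypothetical naming scheme
    payload = json.dumps({"cartId": cart_id, "lines": lines})
    container[blob_name] = payload.encode("utf-8")
    return blob_name

def export_carts_to_csv(container: dict) -> str:
    """Step 4: what a scheduled job could do, consume all blobs and emit CSV."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["cart_id", "product_id", "quantity"])
    for blob_name in sorted(container):
        cart = json.loads(container[blob_name])
        for line in cart["lines"]:
            writer.writerow([cart["cartId"], line["productId"], line["quantity"]])
    container.clear()  # the blobs have been consumed
    return out.getvalue()

if __name__ == "__main__":
    fake_container = {}  # in-memory stand-in for a blob container
    store_cart_blob(fake_container, "A1", [{"productId": "P100", "quantity": 2}])
    print(export_carts_to_csv(fake_container))
```

Storing one blob per cart keeps each write independent, so the scheduled job can pick up whatever has accumulated since its last run.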

Implementation

For the first two steps of the approach above, see the following blog posts for more information:

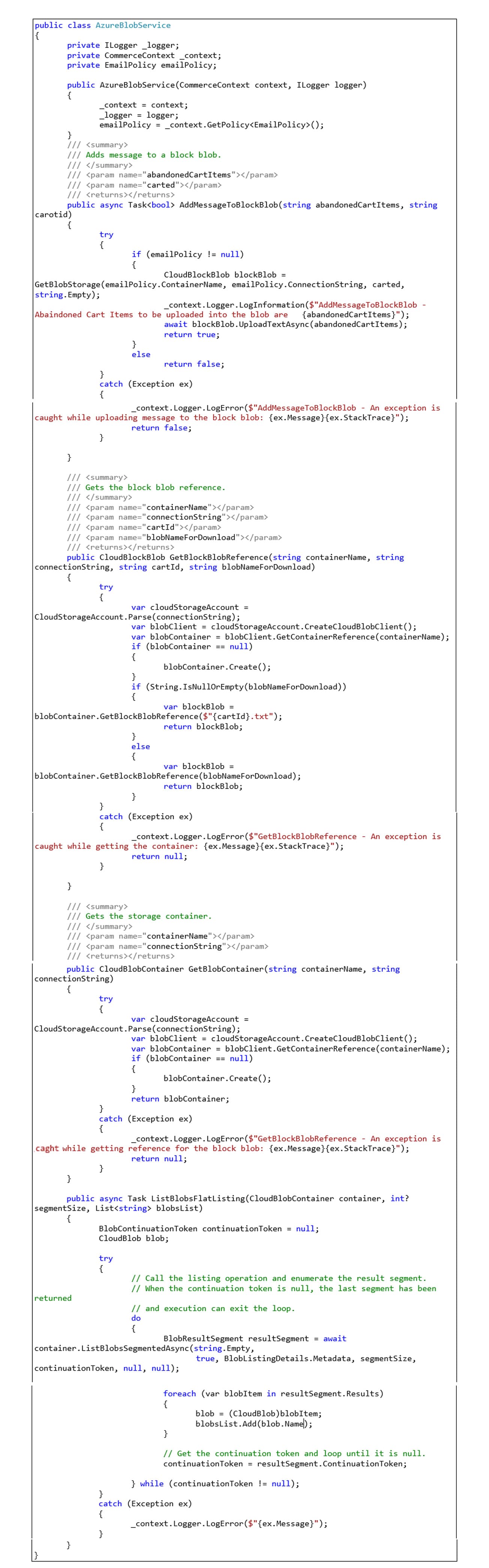

Below are the helper functions that facilitate the third step of the approach:
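As a rough illustration only (these are not the original helpers from the Commerce solution, which would be written in C#), the following Python sketch shows what such helpers could look like with the `azure-storage-blob` package. The function names, the date-based blob naming and the payload shape are assumptions. `BlobServiceClient.from_connection_string`, `get_container_client` and `upload_blob` are the SDK calls used.

```python
import json
from datetime import datetime, timezone

def cart_blob_name(cart_id, now=None):
    """Hypothetical naming scheme: one blob per cart, grouped by UTC date."""
    now = now or datetime.now(timezone.utc)
    return f"{now:%Y/%m/%d}/cart-{cart_id}.json"

def cart_payload(cart_id, lines):
    """Serialize the cart lines to UTF-8 JSON bytes for upload."""
    return json.dumps({"cartId": cart_id, "lines": lines}, sort_keys=True).encode("utf-8")

def get_container(connection_string, container_name):
    """Return a container client; requires the azure-storage-blob package."""
    from azure.storage.blob import BlobServiceClient  # imported lazily
    service = BlobServiceClient.from_connection_string(connection_string)
    return service.get_container_client(container_name)

def upload_cart(container, cart_id, lines, now=None):
    """Upload one cart as a block blob, replacing any existing blob of that name."""
    name = cart_blob_name(cart_id, now)
    container.upload_blob(name=name, data=cart_payload(cart_id, lines), overwrite=True)
    return name
```

A call might look like `upload_cart(get_container(conn_str, "abandoned-carts"), cart_id, lines)`, where `conn_str` and the container name come from your own configuration.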

Stay tuned for the fourth step, which I will explain in more detail in an upcoming blog post.

If you're looking for a way to store large amounts of data in Azure Storage, this blog post should help. Otherwise, feel free to reach out for more information.